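Product Service Level Agreement (SLA)

Effective date: January 15, 2019

This Service Level Agreement ("SLA") sets out the service availability targets and the compensation scheme for the Content Delivery Network service ("CDN") that Baidu AI Cloud provides to users.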

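1. Definitions

Service period: one service period is one calendar month.

Service region: mainland China (excluding Hong Kong, Macao, and Taiwan).

Total minutes in a service period: calculated for each month on the basis of seven (7) days per week and twenty-four (24) hours per day.

Error request: a request that returns an HTTP 5XX status code, or a normal user request that fails to reach the CDN servers because of a CDN service fault.

Valid request: any request received by the CDN servers for any CDN domain acceleration service under the customer's Baidu AI Cloud account.

Per-5-minute error rate:

Monthly service fee: the total service fees the user paid for CDN in one calendar month.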

2. Service Availability

2.1 Service Availability Formula

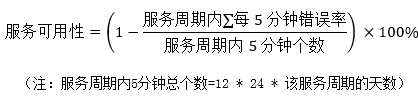

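CDN service availability is measured per service period. The sum of the per-5-minute error rates in the service period is divided by the total number of 5-minute intervals in the service period to obtain the average per-5-minute error rate, from which service availability is derived.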
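The calculation described above can be sketched in Python. This is an illustration only, not Baidu AI Cloud's actual implementation. It rests on two assumptions that the text of this agreement does not spell out: that the per-5-minute error rate is the number of error requests divided by the number of valid requests in that interval, and that service availability is the complement of the average error rate. The function names are hypothetical.

```python
from typing import Sequence, Tuple


def five_minute_error_rate(error_requests: int, valid_requests: int) -> float:
    """Error rate for one 5-minute interval.

    Assumption: error rate = error requests / valid requests.
    """
    if valid_requests == 0:
        # Assumption: an interval with no valid requests counts as error-free.
        return 0.0
    return error_requests / valid_requests


def service_availability(intervals: Sequence[Tuple[int, int]]) -> float:
    """Service availability, in percent, over one service period.

    `intervals` holds one (error_requests, valid_requests) pair for each
    5-minute interval in the service period.
    """
    if not intervals:
        raise ValueError("at least one 5-minute interval is required")
    rates = [five_minute_error_rate(e, v) for e, v in intervals]
    # Sum of per-5-minute error rates divided by the number of intervals.
    average_error_rate = sum(rates) / len(rates)
    # Assumption: availability is the complement of the average error rate.
    return (1.0 - average_error_rate) * 100.0
```

For example, a 30-day month contains 30 × 24 × 12 = 8,640 five-minute intervals. If ten of them have a 50% error rate and the rest have none, the average error rate is 5 / 8,640 and the sketch returns an availability of about 99.94%.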

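2.2 Service Availability Commitment

Baidu AI Cloud commits that CDN service availability will be no lower than 99.90% in any service period. If CDN fails to meet this commitment, the user may obtain compensation under Section 3 of this agreement.

Compensation does not cover request failures or service unavailability caused by any of the following:

(1) System maintenance performed by Baidu AI Cloud after notifying the user in advance, including reasonable upgrades, changes, shutdowns, cutovers, repairs, and simulated failure drills;

(2) Faults or configuration changes in any network or equipment other than equipment belonging to Baidu AI Cloud;

(3) Errors arising because a domain name was blocked owing to non-compliant user content or for other reasons;

(4) Reduced availability caused by a large-scale traffic surge of which the user did not notify Baidu AI Cloud in writing in advance;

(5) Hacker attacks on the user's applications or data;

(6) Loss or leakage of data, access codes, passwords, or similar information resulting from improper maintenance or inadequate confidentiality on the user's part;

(7) The user's negligence or operations authorized by the user;

(8) Force majeure and unforeseen events;

(9) Other unavailability not attributable to Baidu AI Cloud;

(10) The user's failure to follow Baidu AI Cloud product documentation or usage recommendations.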

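3. Compensation

3.1 Compensation Standard

Compensation is calculated according to the table below, based on the monthly CDN service availability under a given Baidu AI Cloud account of the user. Compensation is provided only as vouchers for purchasing Baidu AI Cloud CDN products, and total compensation will not exceed 50% of the monthly service fee the user paid for CDN under that account in the month in which the service availability commitment was not met.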

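| Service availability | Voucher amount |
|---|---|
| Below 99.90% but at or above 99.00% | 10% of the monthly service fee |
| Below 99.00% but at or above 95.00% | 25% of the monthly service fee |
| Below 95.00% | 50% of the monthly service fee |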
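As an illustration, the tiers in the table can be expressed as a small Python lookup. This is a minimal sketch rather than an official calculator: the function names are hypothetical, and it assumes availability is supplied as a percentage and the monthly service fee as a `Decimal`. Because the highest tier is 50%, the result stays within the 50% cap stated in Section 3.1.

```python
from decimal import Decimal


def voucher_rate(availability_percent: float) -> Decimal:
    """Fraction of the monthly service fee compensated as vouchers."""
    if availability_percent >= 99.90:
        # Commitment met: no compensation is due.
        return Decimal("0")
    if availability_percent >= 99.00:
        return Decimal("0.10")
    if availability_percent >= 95.00:
        return Decimal("0.25")
    return Decimal("0.50")


def voucher_amount(availability_percent: float, monthly_service_fee: Decimal) -> Decimal:
    """Voucher amount for one account in one service period."""
    return monthly_service_fee * voucher_rate(availability_percent)
```

For instance, with a monthly service fee of 1,000 and an availability of 99.50%, the sketch yields a voucher worth 100.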

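3.2 Time Limit for Compensation Claims

After the fifth (5th) business day of each month, the user may submit a compensation claim for service that failed to meet the availability commitment in the previous month. A claim must be submitted within two (2) months after the end of the month in which CDN failed to meet the availability commitment. Claims submitted after this time limit will not be accepted.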
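The claim window can be estimated with a short Python sketch. It is only an approximation under stated assumptions: business days are treated as Monday through Friday with public holidays ignored, "after the fifth business day" is read as the following calendar day, and the deadline is read as the last day of the second month after the affected month. The function names are hypothetical, and Baidu AI Cloud's own interpretation of these dates takes precedence.

```python
import calendar
from datetime import date, timedelta
from typing import Tuple


def fifth_business_day(year: int, month: int) -> date:
    """Fifth weekday (Monday to Friday) of the month; holidays are ignored."""
    day = date(year, month, 1)
    count = 0
    while True:
        if day.weekday() < 5:  # 0-4 are Monday to Friday
            count += 1
            if count == 5:
                return day
        day += timedelta(days=1)


def claim_window(year: int, month: int) -> Tuple[date, date]:
    """Return (earliest, latest) claim dates for a shortfall in year/month."""
    # The month that follows the affected month.
    next_year, next_month = (year + 1, 1) if month == 12 else (year, month + 1)
    # Assumption: "after the fifth business day" means the next calendar day.
    earliest = fifth_business_day(next_year, next_month) + timedelta(days=1)
    # Assumption: the deadline is the last day of the second month
    # after the affected month.
    total = month + 2
    deadline_year = year + (total - 1) // 12
    deadline_month = (total - 1) % 12 + 1
    last_day = calendar.monthrange(deadline_year, deadline_month)[1]
    return earliest, date(deadline_year, deadline_month, last_day)
```

Under these assumptions, a shortfall in January 2019 could be claimed from February 8, 2019 through March 31, 2019. Actual business days may differ where public holidays fall in the first week of the month.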

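4. Other

(1) To the extent permitted by laws and regulations, Baidu AI Cloud is responsible for interpreting this agreement.

(2) Baidu AI Cloud has the right to modify the terms of this SLA. If the terms of this SLA are modified, Baidu AI Cloud will notify you by website announcement or by email. If you do not agree to the modifications, you have the right to stop using the CDN service; if you continue to use the CDN service, you are deemed to have accepted the modified SLA.

(3) Under this agreement, Baidu AI Cloud CDN may deliver any notice to the user by web page announcement, in-site message, email, SMS, or other means; such a notice is deemed delivered to the recipient on the date it is sent. Baidu AI Cloud bears no liability to the user for any loss arising therefrom.

(4) The formation, performance, and interpretation of this agreement and the resolution of disputes are governed by the laws of China and subject to the jurisdiction of Chinese courts. If any dispute arises between the parties concerning the content or performance of this agreement, the parties shall make every effort to resolve it through friendly negotiation; if negotiation fails, either party may bring a lawsuit before the People's Court of Haidian District, Beijing.

(5) This agreement constitutes the entire agreement between the parties regarding the matters stipulated herein and other related matters. Except as provided in this agreement, no other rights are granted to the parties.

(6) If any provision of this agreement is wholly or partly invalid or unenforceable for any reason, the remaining provisions of this agreement remain valid and binding.

(7) For the terms binding on users, see the provisions under "User Rights and Obligations" in the User Service Agreement.

(8) For the service provider's disclaimer terms, see the provisions under "Disclaimer" in the User Service Agreement.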