Use BSC to Import BOS Data into Es

Last Updated: 2020-11-17

Introduction

This document describes how to import data from BOS (Baidu Object Storage System) into Es through BSC (Baidu Streaming Computing Service).

Upload the Data to the BOS

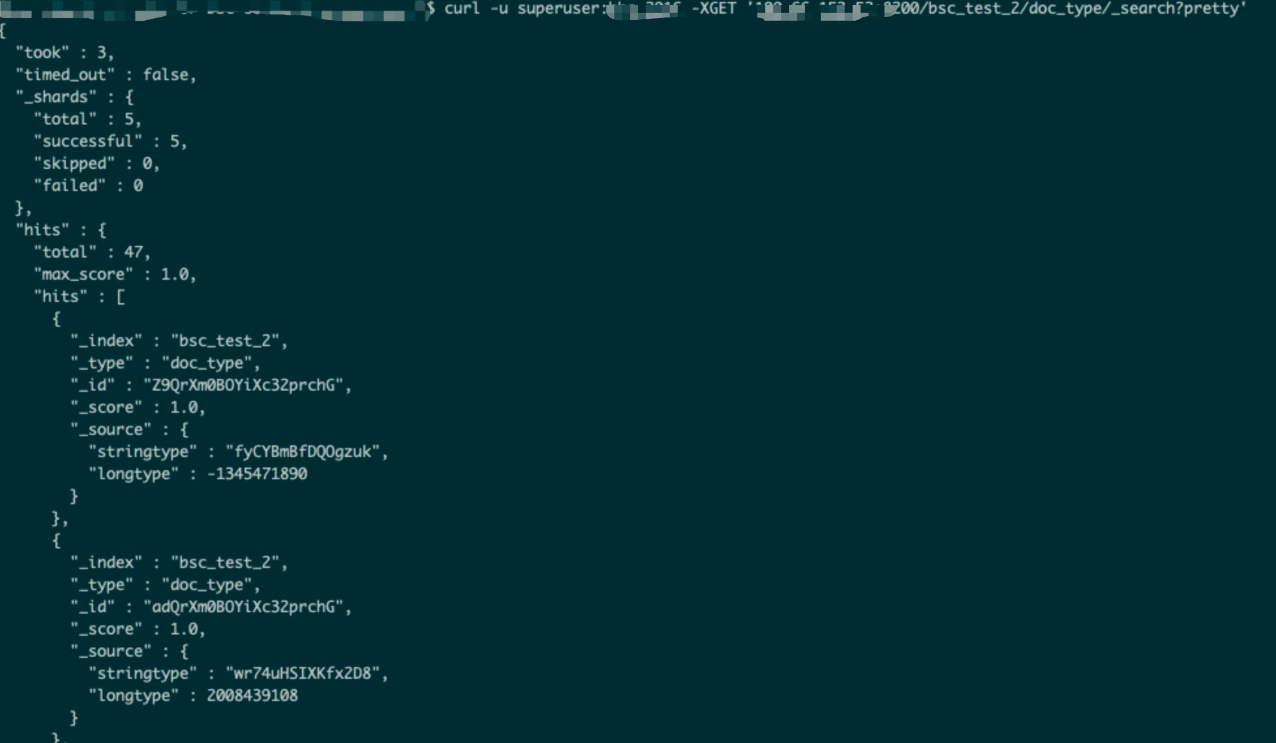

Log in to the management console, open the BOS product interface, create a bucket, and then upload the test file:

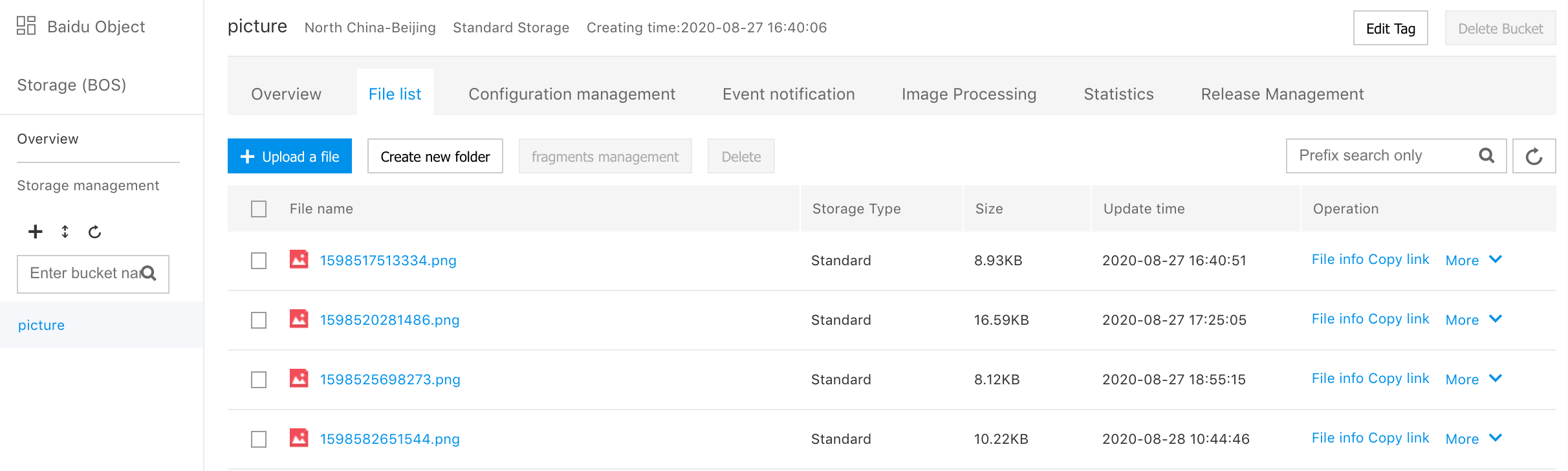

The content of the test file is as follows:
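The file contents are not reproduced here. As a minimal sketch only, assuming the file holds one JSON object per line with keys matching the source table columns used later (“stringtype” and “longtype”), a comparable test file could be generated as follows. The record values and the helper names are hypothetical.

```python
import json

# Hypothetical sample records; the real test file's values are not given.
SAMPLE_RECORDS = [
    {"stringtype": "first", "longtype": 1},
    {"stringtype": "second", "longtype": 2},
]


def to_json_lines(records):
    """Serialize records as one JSON object per line (assumed layout)."""
    return "\n".join(json.dumps(record) for record in records) + "\n"


def write_sample(path, records=SAMPLE_RECORDS):
    """Write the sample records to a local file for upload via the console."""
    with open(path, "w", encoding="utf-8") as handle:
        handle.write(to_json_lines(records))
```

Calling write_sample("test.json") would produce a local file that can then be uploaded to the bucket through the console.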

Edit a BSC Job

Create a BOS Source Table

Open the BSC job editing interface and create a BOS source table. The SQL code is as follows:

    CREATE table source_table_bos(
        stringtype STRING,
        longtype LONG
    ) with(
        type = 'BOS',
        path = 'bos://es-sink-test/test',
        encode = 'json'
    );

Here, “path” is the BOS path marked by the red box in the figure above, with the prefix “bos://” added in front of it.
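The path rule can be sketched as a small helper. This is only an illustration: the function name “bos_path” is hypothetical, and it assumes the path shown in the console consists of a bucket name followed by an object prefix (here “es-sink-test” and “test”).

```python
def bos_path(bucket, object_prefix):
    """Join a bucket name and an object prefix into a bos:// path."""
    if not bucket or "/" in bucket:
        raise ValueError("bucket must be a non-empty name without '/'")
    # Drop a leading slash so the result has exactly one separator.
    return "bos://" + bucket + "/" + object_prefix.lstrip("/")


print(bos_path("es-sink-test", "test"))  # bos://es-sink-test/test
```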

Create an Es Sink Table

The SQL code is as follows:

    create table sink_table_es(
        stringtype String,
        longtype Long
    )with(
        type = 'ES',
        es.net.http.auth.user = 'superuser',
        es.net.http.auth.pass = 'bbs_2016',
        es.resource = 'bsc_test_2/doc_type',
        es.clusterId = '296245916518715392',
        es.region = 'bd',
        es.port = '8200',
        es.version = '6.5.3'
    );

Where:

- “es.resource” specifies the Es index and type, written as index/type. Es automatically creates the specified index when BSC writes data.

- “es.clusterId” is the ID of the Es cluster.

- “es.region” is the region code of the Es service. Refer to the Es service region codes for the mapping between regions and codes.
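To make the index/type convention of “es.resource” concrete, the following sketch splits such a value into its two parts. The helper name “split_resource” is hypothetical and is not part of BSC or Es; it only illustrates how the value in the example above is read.

```python
def split_resource(resource):
    """Split an 'index/type' string into (index, type)."""
    index, separator, doc_type = resource.partition("/")
    if not separator or not index or not doc_type:
        raise ValueError("expected 'index/type', got %r" % resource)
    return index, doc_type


index, doc_type = split_resource("bsc_test_2/doc_type")
print(index, doc_type)  # bsc_test_2 doc_type
```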

Edit an Import Statement

The SQL statement is as follows:

    insert into
        sink_table_es(stringtype, longtype) outputmode append
    select
        stringtype,
        longtype
    from
        source_table_bos;

Save, Release, and Run the Job

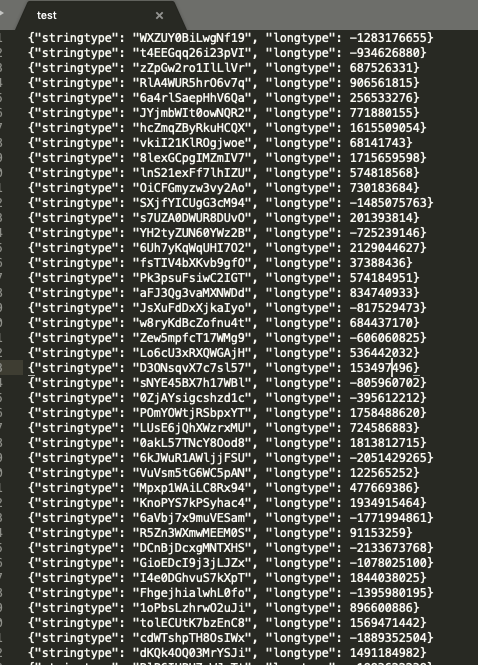

View the Data in Es
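One way to check the imported documents is to query the index directly. The sketch below rests on several assumptions: that the Es cluster exposes the standard Elasticsearch “_search” REST endpoint over HTTP with basic authentication on the port configured above, and that you know the cluster's access address. The host “es.example.com” and both function names are placeholders, not values from this document.

```python
import base64
import urllib.request


def build_search_request(host, port, index, user, password, size=10):
    """Build a GET request for the Elasticsearch _search endpoint."""
    url = "http://%s:%s/%s/_search?size=%d" % (host, port, index, size)
    credentials = ("%s:%s" % (user, password)).encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    request = urllib.request.Request(url)
    request.add_header("Authorization", "Basic " + token)
    return request


def run_search(request):
    """Send the request and return the raw JSON response text."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")
```

For example, run_search(build_search_request("es.example.com", 8200, "bsc_test_2", "superuser", "your-password")) would return up to ten documents from the index written by the job, provided the assumptions above hold for your cluster.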